Why Should I Even Use Search Console?

What is Google Search Console?

I feel like everyone knows what Google Analytics is for, but few people know what Google Search Console does, or have even heard of it. Put simply, it is your control panel for directing and monitoring how Google sees your website. For example, say you deleted a page and forgot to set up a redirect. The next time Google crawls your site, Search Console will let you know that you now have a 404 error. This alert shows you exactly where to add a redirect, which saves you from users bouncing or leaving your website. Search Console helps create a better experience for your website users, and it is a tool we use often to keep our Search Engine Optimization clients' websites performing at their best.

So How Do I Use Google Search Console?

I have broken this article down by Search Console section. Some sections may not apply to your site(s), but it's good to know how to use each tool for the future. Connecting Search Console to your site is easy. It connects seamlessly with Google Analytics, so installing extra tags is not needed.

Messages

This one is pretty straightforward. Messages you may receive include new account alerts, notices about newly added users, and alerts about pages that can no longer be found.

Search Appearance

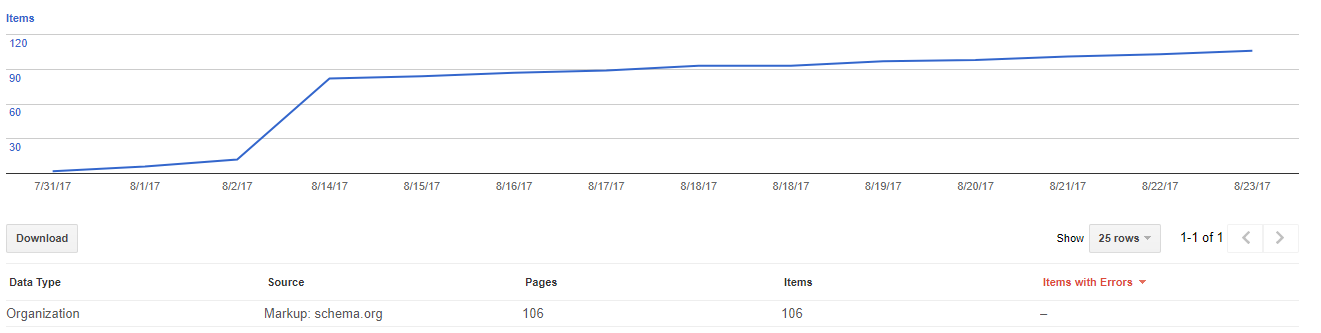

Structured Data

Structured data helps search engines return more informative results for users. In return, Google may show details from your structured data, like pricing and ratings, in its search results. This section of Search Console shows which types of structured data you have implemented, along with any errors.

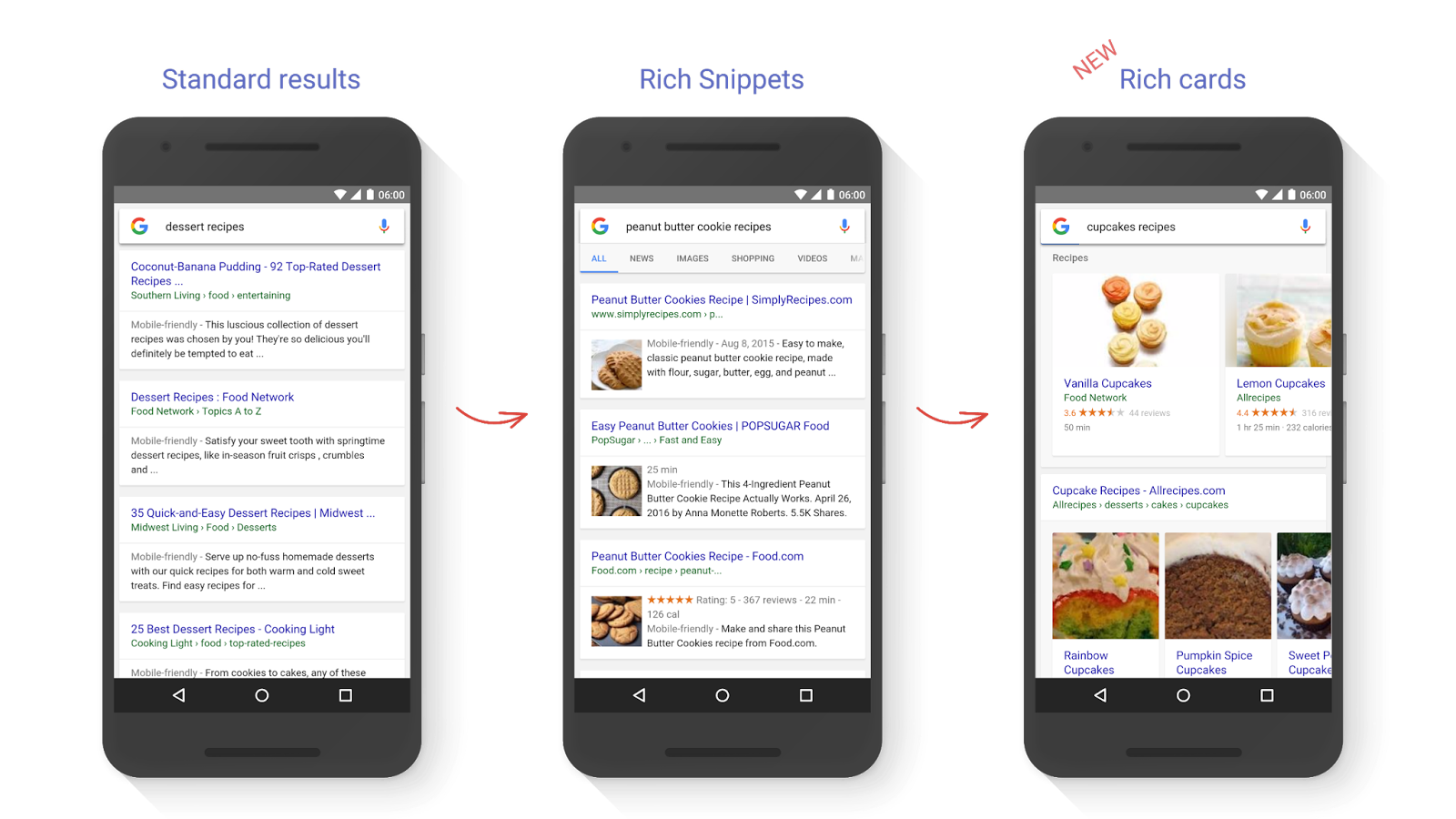
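To make the pricing and ratings example concrete, here is a minimal sketch of how a product's structured data could be generated as JSON-LD using the schema.org vocabulary. The product name, price, and rating values are made up for illustration, and the helper names `product_json_ld` and `script_tag` are my own, not part of any Google tool.

```python
import json


def product_json_ld(name, price, currency, rating, review_count):
    """Build a schema.org Product description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
        },
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": str(rating),
            "reviewCount": str(review_count),
        },
    }
    return json.dumps(data, indent=2)


def script_tag(json_ld):
    """Wrap the JSON-LD in the script tag that goes into the page's HTML."""
    return f'<script type="application/ld+json">\n{json_ld}\n</script>'


# Hypothetical product used only as an example.
snippet = script_tag(product_json_ld("Example Widget", 19.99, "USD", 4.5, 27))
print(snippet)
```

The printed snippet is what would be pasted into the page. Whether Google chooses to display the price or rating is still up to Google.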

Rich Cards

Rich cards are another form of structured data. Recipe search results are probably the most popular Rich Cards on Google. They appear as cards in a carousel that users can swipe through for easy browsing. This section will show which Rich Cards are showing on Google as well as any errors.

Data Highlighter

This section is a tool that makes adding structured data or schema easier for those who aren’t the most technical. It is an alternative that can tag data fields with the press of a button. I find it to be clunky and inconsistent, but as always, if you find it helpful, let us know!

HTML Improvements

If you’re ever wondering if you have duplicate meta descriptions or missing title tags, then this is the section for you! This tool is great because it shows each type of error and exactly where it occurs, so you can fix it right away. This is a great section to start with when you want to make sure your pages are fully optimized.

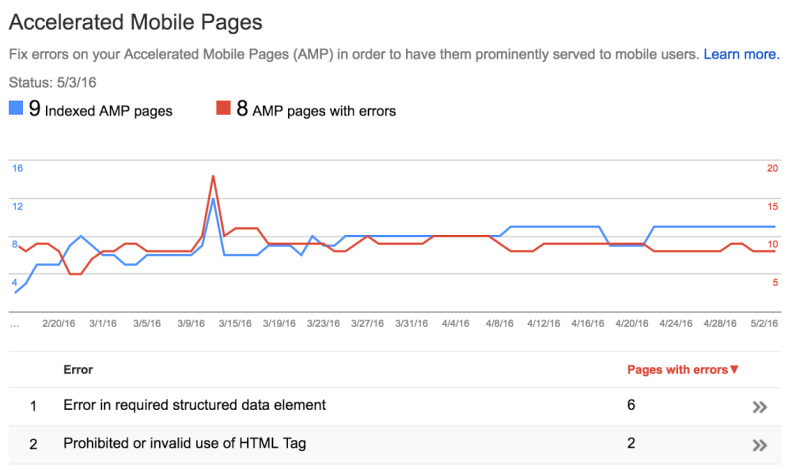
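If you want to run a similar check on your own pages before Google does, the idea can be sketched in a few lines of Python. This is only an illustration of the two problems named above (missing title tags and duplicate meta descriptions), not how Search Console itself works. The page paths and HTML are invented, and `audit_pages` is a hypothetical helper.

```python
from collections import defaultdict
from html.parser import HTMLParser


class HeadTagParser(HTMLParser):
    """Collect the <title> text and the meta description of one page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = (attrs.get("content") or "").strip()

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def audit_pages(pages):
    """pages maps URL -> HTML. Returns (urls_missing_title, duplicate_descriptions)."""
    missing_title = []
    by_description = defaultdict(list)
    for url, html in pages.items():
        parser = HeadTagParser()
        parser.feed(html)
        if not parser.title.strip():
            missing_title.append(url)
        if parser.description:
            by_description[parser.description].append(url)
    duplicates = {d: urls for d, urls in by_description.items() if len(urls) > 1}
    return missing_title, duplicates


# Invented pages used only as an example.
pages = {
    "/a": '<html><head><title>Page A</title><meta name="description" content="Same text"></head></html>',
    "/b": '<html><head><meta name="description" content="Same text"></head></html>',
    "/c": '<html><head><title>Page C</title><meta name="description" content="Unique text"></head></html>',
}
missing, dupes = audit_pages(pages)
print("Missing titles:", missing)
print("Duplicate descriptions:", dupes)
```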

Accelerated Mobile Pages

Accelerated Mobile Pages, or AMP, are pages on your website that use Google’s open source initiative for loading web pages extremely fast. AMP pages load quickly even on slow networks. This section will let you know if you have any errors that would keep certain AMP pages from showing on Google.

Search Traffic

Search Analytics

This part of Search Console will tell you how well organic traffic is performing on Google. It shows impressions, click-through rates, and keyword positions. With more and more keywords landing under the dreaded “Not Provided” in Google Analytics, this section will let you get the most out of your keyword report. It has evolved to where you can actually drill down to view keywords for each page, by device, and by type of search performed. The only restriction is that historical data only goes back 90 days. For anything older than that, you must use Google Analytics or other software.

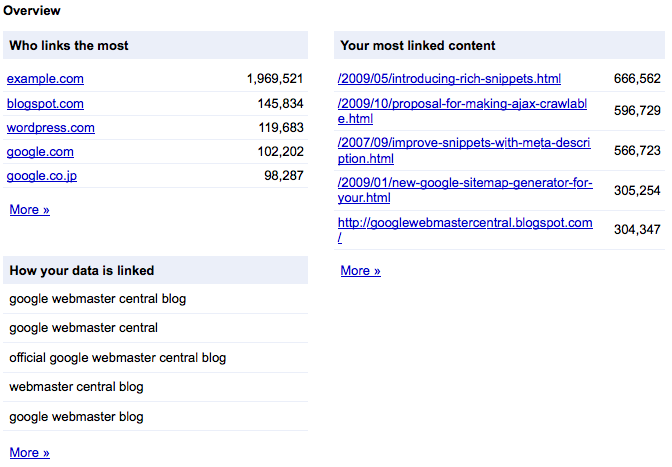
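For anyone new to these metrics, click-through rate is simply clicks divided by impressions. The sketch below shows the arithmetic on a few made-up rows. The queries and numbers are hypothetical and are not taken from any real report.

```python
def click_through_rate(clicks, impressions):
    """CTR as a fraction; 0.0 when there were no impressions."""
    return clicks / impressions if impressions else 0.0


# Hypothetical rows: (query, clicks, impressions, average position)
rows = [
    ("blue widgets", 30, 1000, 4.2),
    ("buy widgets online", 12, 150, 2.1),
    ("widget repair", 0, 0, 0.0),
]

for query, clicks, impressions, position in rows:
    ctr = click_through_rate(clicks, impressions)
    print(f"{query}: CTR {ctr:.1%}, average position {position}")
```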

Links to Your Site

Backlinks, or external links, are an important indicator to a search engine's algorithm that your site is reputable. Each linking site is weighted differently based on its popularity and reputation, or authority. The more links you have from sites with a good reputation, the more Google will see your website as authoritative. This external link report will show any abnormal links that could potentially be spam and create negative SEO. Take a look and see if there are any low-quality websites linking to your site. It’s good to start with the URLs containing the most links. Manually investigate the quality of sites linking to you 100+ times from one page. If they are spammy websites you can use the Disavow Tool, but be sure to use this tool with caution. One bad move could knock rankings down dramatically.
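A disavow file is a plain text file with one entry per line: either a full URL, or `domain:` followed by a domain name to cover every link from that site, with `#` marking comment lines. As a rough sketch, assuming you have already reviewed the links by hand, the file contents could be assembled like this. The spam domains are invented, and `disavow_lines` is a hypothetical helper.

```python
from urllib.parse import urlparse


def disavow_lines(flagged_urls, whole_domains=()):
    """Build disavow-file lines from links that were reviewed manually."""
    lines = ["# Links reviewed by hand and judged to be spam"]
    domains = sorted(set(whole_domains))
    lines += [f"domain:{domain}" for domain in domains]
    for url in sorted(set(flagged_urls)):
        # Skip single URLs already covered by a whole-domain entry.
        if urlparse(url).hostname not in domains:
            lines.append(url)
    return lines


# Invented examples only.
lines = disavow_lines(
    ["http://spam.example/page1", "http://bad.example/x"],
    whole_domains=["bad.example"],
)
print("\n".join(lines))
```

Nothing here decides which links are bad. That judgment still has to come from a careful manual review, for the reasons given above.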

Internal Links

The internal link report is the perfect tool when optimizing the on-page link structure of your website. You will want to make sure that the most important pages on your site receive the most links. Doing this helps important pages rank higher, so that visitors land on the most relevant page.
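The same idea can be sketched in code: given a map of which pages link to which, count the inbound internal links for each page and see whether your important pages come out on top. The site structure below is invented for illustration, and `inbound_link_counts` is a hypothetical helper.

```python
from collections import Counter


def inbound_link_counts(link_graph):
    """link_graph maps each page to the internal pages it links to."""
    counts = Counter({page: 0 for page in link_graph})
    for source, targets in link_graph.items():
        # Count each linking page once and ignore self-links.
        for target in set(targets):
            if target != source:
                counts[target] += 1
    return counts


# Invented site structure used only as an example.
graph = {
    "/": ["/products", "/blog"],
    "/blog": ["/products", "/"],
    "/products": ["/"],
    "/about": [],
}
counts = inbound_link_counts(graph)
for page, total in counts.most_common():
    print(page, total)
```

A page like `/about` with zero inbound links is a candidate for more internal linking if it matters to your business.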

Manual Actions

This report is one of the most important reasons why you should be using Google Search Console. If your website violates Google’s guidelines, you will receive a notification in the Manual Actions report. We hope that you never see a message here, but if you do, you will have to submit a reconsideration request. Reconsideration requests take a good amount of time and require a quick response from your team. Let’s say you are penalized for unnatural link building to your site. Google will want data. They will want to see proof that you have reached out to websites multiple times asking them to remove your website’s links from their site. Google will also want your link removal campaign, your disavow file, and a letter explaining the steps you took to fix the problem. It’s an important reason why you shouldn’t be buying links or directory listings. It will come back to bite you.

International Targeting

If you have a website that caters to multiple languages, you want the search engine results to show in the same language as the person searching. To do this, a specific tag has to be added to your website telling Google which URL pertains to which language. This report will let you know if the tags are not working correctly.
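The tag in question is most commonly the `hreflang` annotation, a `link` element placed in the head of each page. As a small sketch, assuming a site with English and Spanish versions at made-up URLs, the tags could be generated like this (`hreflang_tags` is a hypothetical helper):

```python
from html import escape


def hreflang_tags(alternates):
    """alternates maps a language (or language-region) code to its URL."""
    return [
        f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(url)}" />'
        for code, url in alternates.items()
    ]


# Made-up URLs used only as an example.
tags = hreflang_tags({
    "en": "https://example.com/en/",
    "es": "https://example.com/es/",
})
print("\n".join(tags))
```

Each language version of a page should carry the full set of tags, including one pointing to itself.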

Mobile Usability

Mobile usability is becoming more important every day. Mobile search now accounts for more than half of all searches. With everyone having a mobile device with them at all times, it only makes sense that websites should be built for mobile devices. That is why Google has come out with the Mobile Usability Report.

Here are some things that the report will check:

- Content wider than screen

- Clickable elements too close together

- Viewport not configured
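As a rough illustration of the last check in that list, the sketch below looks for a viewport meta tag in a page's HTML. It only tests whether the tag is present, which is far simpler than what Google's report does. The sample HTML is invented, and `has_viewport` is a hypothetical helper.

```python
from html.parser import HTMLParser


class ViewportFinder(HTMLParser):
    """Remember the content of the first <meta name="viewport"> tag seen."""

    def __init__(self):
        super().__init__()
        self.viewport = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        is_viewport = (attrs.get("name") or "").lower() == "viewport"
        if tag == "meta" and is_viewport and self.viewport is None:
            self.viewport = attrs.get("content") or ""


def has_viewport(html):
    """Return True when the page declares a viewport meta tag."""
    finder = ViewportFinder()
    finder.feed(html)
    return finder.viewport is not None


# Invented pages used only as an example.
responsive = '<head><meta name="viewport" content="width=device-width, initial-scale=1"></head>'
desktop_only = "<head><title>Old page</title></head>"
print(has_viewport(responsive), has_viewport(desktop_only))
```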

If your mobile site is designed correctly, the report will show a message confirming that no mobile usability errors were detected.

Google Index

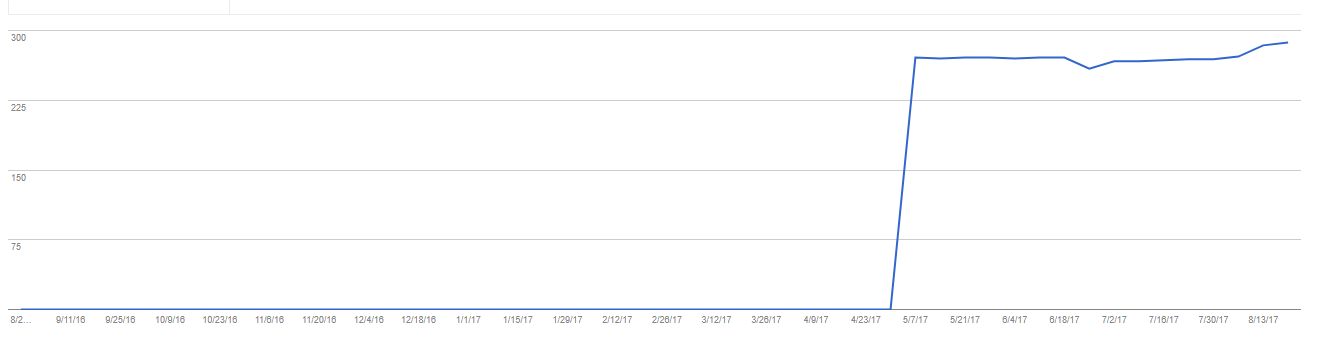

Index Status

The Index Status report is straightforward. It shows how many pages on your website are indexed, meaning they can show on Google. If you are continuously adding new products or blog posts, you should see the index status rise. If you see a drop when you have not removed pages, this is a good signal that something is wrong and worth looking into further.

Blocked Resources

Blocked resources are site elements, such as images, CSS, or JavaScript files, that Googlebot is blocked from crawling. This is very important because you want to make sure that Googlebot can see everything that contains content that is valuable to your SEO.

Remove URLs

The page removal tool is a temporary way to remove certain pages from showing on Google. Google will relist the page after 90 days if the proper steps aren’t taken. Proper steps include 301 redirects.

Crawl

You will find errors in the Crawl Report when Googlebot can’t find a page from an internal or external link. This can easily be fixed by fixing the error at its source, or if needed, adding a 301 redirect to move the link equity to another page.
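One way to picture the redirect fix is as a lookup table from retired paths to their replacements. The sketch below is a toy model of a server's decision, not a real web server configuration. The paths are invented, and `respond` is a hypothetical helper.

```python
from http import HTTPStatus

# Invented paths: pages that exist, and retired pages with their replacements.
LIVE_PAGES = {"/", "/pricing"}
REDIRECTS = {"/old-pricing": "/pricing"}


def respond(path):
    """Return (status, redirect_target) for a requested path."""
    if path in LIVE_PAGES:
        return HTTPStatus.OK, None
    if path in REDIRECTS:
        # A 301 tells crawlers the move is permanent.
        return HTTPStatus.MOVED_PERMANENTLY, REDIRECTS[path]
    return HTTPStatus.NOT_FOUND, None


for path in ["/pricing", "/old-pricing", "/deleted-page"]:
    status, target = respond(path)
    print(path, int(status), target)
```

Any path that ends up in the last branch is the kind of URL that would surface as a 404 in the report.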

Possible Crawl Errors:

- DNS (Domain Name System) errors

- Server errors

- Soft 404 errors

- 404 errors

Crawl Stats

This report lets you see how Google crawls your website.

It’s broken down by:

- Pages Crawled Per Day

- Kilobytes Downloaded per Day

- Time Spent Downloading a Page (in milliseconds)

If you see dramatic spikes and you haven’t added or removed pages recently, you should examine your HTTP requests. Having more than 20 requests on any given page can dramatically slow down a page and hurt your SEO.

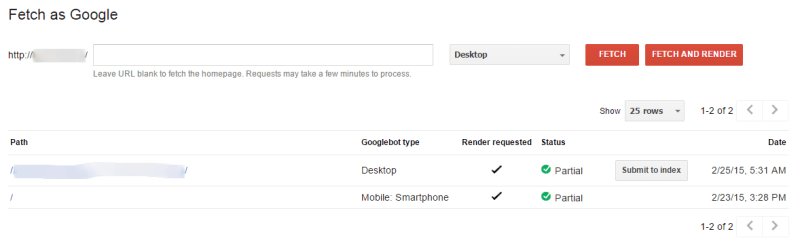

Fetch as Google

Fetch is one of my favorite tools for pushing a new page to Google. It will take time to rank, but this will give it a little push instead of waiting for Google to recrawl and find the page.

Other uses for Fetch include:

- Updating old webpages

- HTTP Migration

- Canonical tag implementation

- Robots.txt file update

- New design

Another part is Fetch and Render, which shows you exactly how Google sees your site. This will tell you whether dynamically generated content is hidden or not.

Robots.txt Tester

This is an important tool because without testing your file, you may block pages or your entire site from showing on Google. If you make a change to the robots.txt or just want to test the existing file, enter the URL that you want to test. This will show you if the page is allowed or blocked.
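You can run a similar allowed-or-blocked check locally with Python's standard library before uploading a new file. This is a sketch under a couple of assumptions: the rules and URLs below are invented, and Python's parser does not match rules exactly the way Googlebot does (for example, it does not handle wildcard patterns the same way), so treat it as a first pass and not a replacement for the tester.

```python
from urllib.robotparser import RobotFileParser

# Invented rules used only as an example.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart
"""


def is_allowed(robots_txt, url, user_agent="Googlebot"):
    """Return True when the given rules allow user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


for url in ["https://example.com/blog/post", "https://example.com/admin/login"]:
    verdict = "allowed" if is_allowed(ROBOTS_TXT, url) else "blocked"
    print(url, verdict)
```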

Sitemaps

Sitemaps on your website are another great way to tell search engines which pages to crawl. Depending on how often you update the site, it would be smart to update the sitemap frequently. This helps Google find every page. The Sitemaps report will show you how many pages were submitted and how many were indexed. It is completely normal to have fewer pages indexed than submitted. If far fewer pages are indexed than submitted, you will want to look into it further.
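If your platform does not generate a sitemap for you, a basic one is easy to build. Here is a minimal sketch using the standard sitemap XML format. The URLs and dates are made up, and `build_sitemap` is a hypothetical helper.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """entries is a list of (url, last_modified) pairs, dates as YYYY-MM-DD."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Made-up pages used only as an example.
sitemap_xml = build_sitemap([
    ("https://example.com/", "2017-01-15"),
    ("https://example.com/blog/first-post", "2017-02-01"),
])
print(sitemap_xml)
```

Regenerating the file whenever pages are added or removed keeps the submitted count in the report meaningful.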

URL Parameters

The URL Parameters tool is another one that you do not want to touch unless you know how it works. A parameter is a part of the URL that classifies the purpose of the page. Older sites with highly dynamic URLs use this tool to avoid duplicate content problems. As with some other tools, configuring the wrong parameter can prevent pages from being crawled. For example, on some eCommerce sites, adding the parameter ‘p’ could remove all product listings from Google, potentially costing a business millions.
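To see why the choice of parameter matters, consider this sketch that strips parameters which do not change page content (such as sorting or session IDs) while keeping ones that do. The parameter names and URLs are assumptions for illustration. On a real site you would need to confirm what each parameter actually does.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed to leave page content unchanged on this hypothetical shop.
IGNORED_PARAMS = {"sessionid", "sort", "utm_source"}


def canonical_url(url, ignored=IGNORED_PARAMS):
    """Drop ignorable query parameters and keep everything else."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in ignored
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


print(canonical_url("https://shop.example/widgets?p=2&sort=price&sessionid=abc"))
print(canonical_url("https://shop.example/widgets?sort=price"))
```

Here `p` is kept because, on this imagined shop, it selects which page of listings is shown. Adding `p` to the ignored set would collapse every listing page into one URL, which is the kind of mistake described above.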

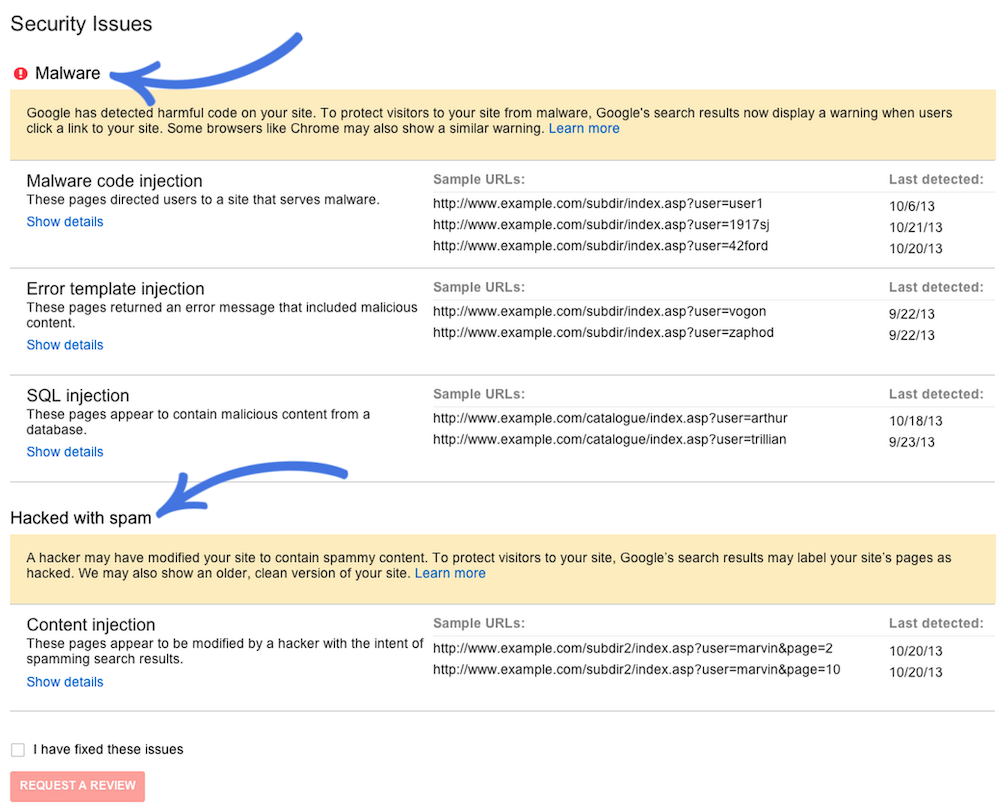

Security Issues

This is a simple report that lets you know if your site shows signs of being hacked or compromised. Here is an example of a website with multiple security issues.

Web Tools

Web tools will bring you to another page that will show the following:

- Structured Data Testing Tool

- Structured Data Markup Helper

- Email Markup Tester

- Other resources for Page Speed, Google My Business, and more.